I am a first-year PhD student advised by Prof. Rose Yu and Prof. Loris D'Antoni. My research interests lie broadly at the intersection of machine learning and formal methods. Recently, I have been focusing on controllable generation with Large Language Models, with applications including (but not limited to) AI4Science and Code Generation.

Previously, I worked with Prof. Guy Van den Broeck at UCLA on leveraging Tractable Probabilistic Models for controllable generation.

Education

- University of California, San Diego, Department of Computer Science and Engineering, Ph.D. Student, Sep. 2025 - present
- Harvey Mudd College, B.S. in Computer Science and Mathematics, Aug. 2021 - May 2025

Experience

- Microsoft, Contract Software Engineer, Aug. 2024 - May 2025

Honors & Awards

- Google CSRMP Awardee, 2023
- HMC Dean's List, 2021-2025

News

Selected Publications

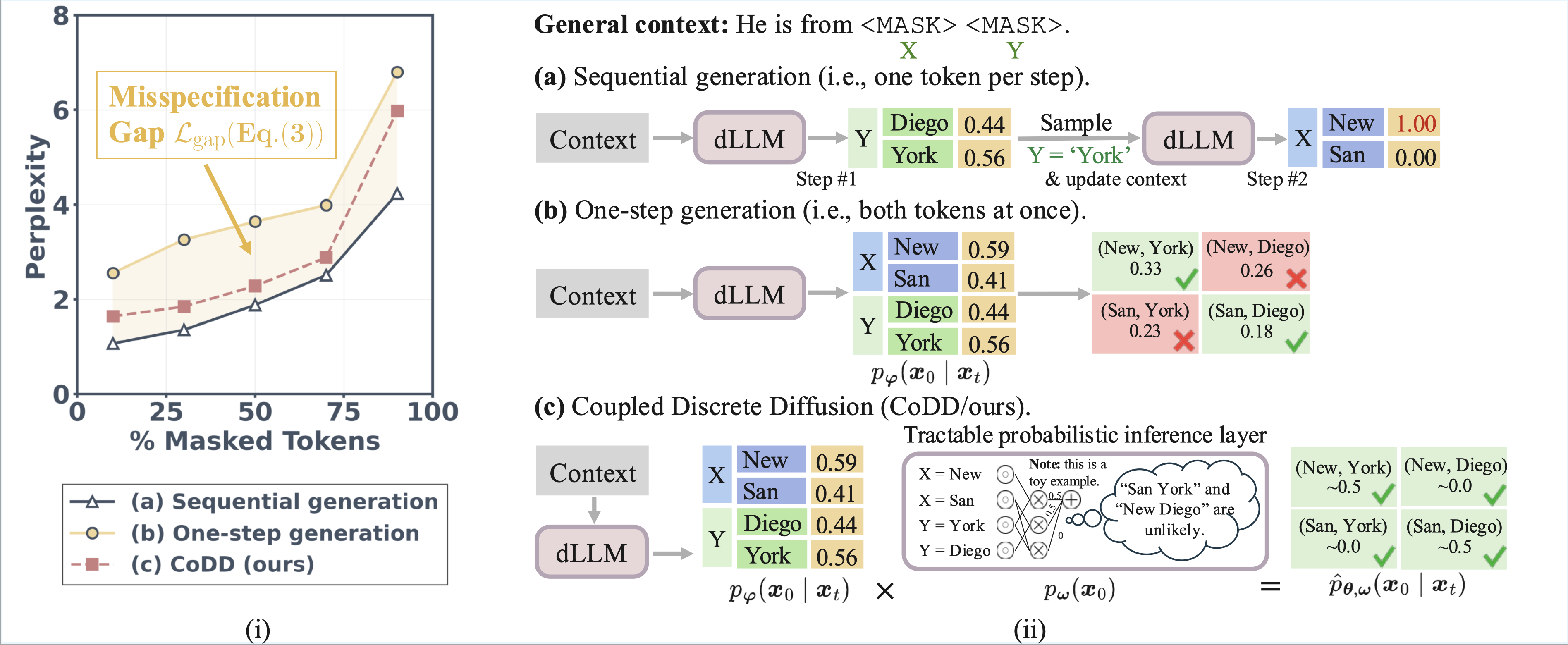

Breaking the Factorization Barrier in Diffusion Language Models

Ian Li, Zilei Shao, Benjie Wang, Rose Yu, Guy Van den Broeck, Anji Liu

Under review. 2026

We propose Coupled Discrete Diffusion (CoDD), a hybrid framework that breaks the factorization barrier by replacing the fully-factorized output distribution with a lightweight, tractable probabilistic inference layer.

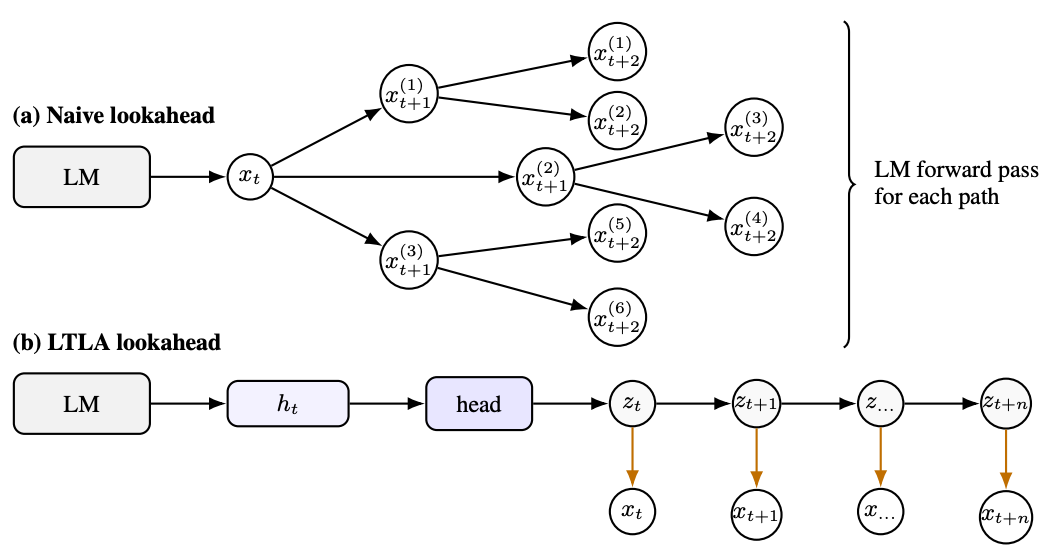

Learning Tractable Distributions of Language Model Continuations

Gwen Yidou-Weng, Ian Li, Anji Liu, Oliver Broadrick, Guy Van den Broeck, Benjie Wang

ArXiv 2025

We propose Learning to Look Ahead (LTLA), a hybrid approach that pairs the same base language model for rich prefix encoding with a fixed tractable surrogate model that computes exact continuation probabilities.

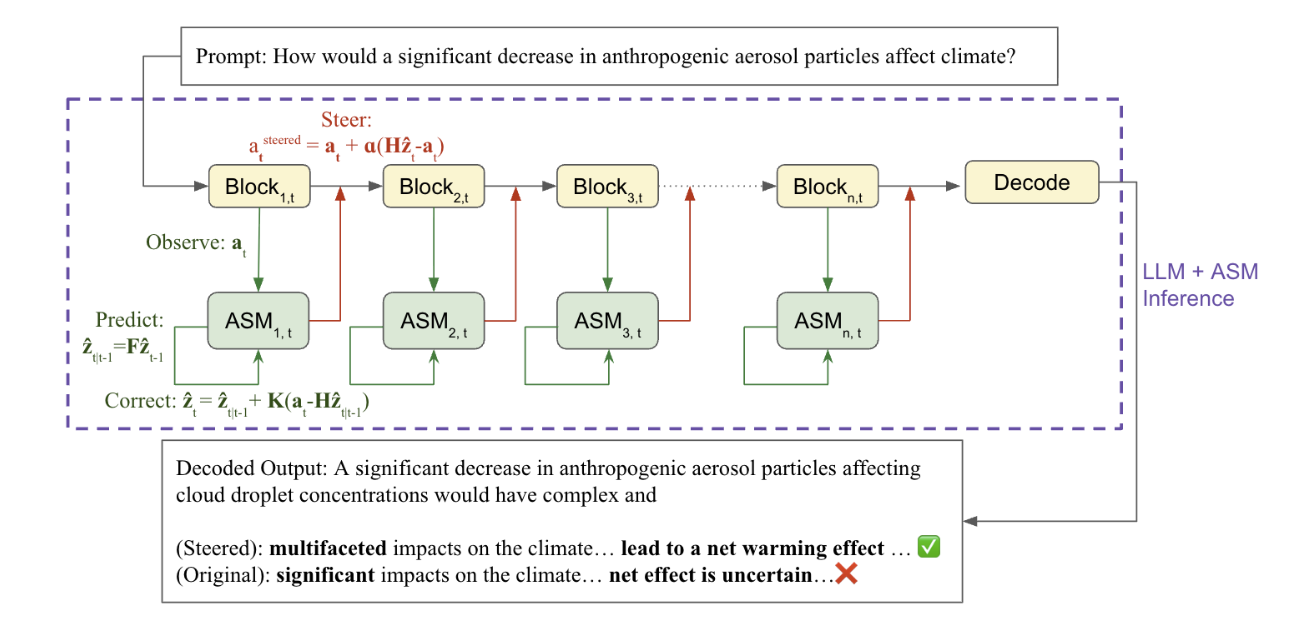

Steering LLMs’ Reasoning With Activation State Machines

Ian Li, Philip Chen, Max Huang, Andrew Park, Loris D'Antoni, Rose Yu

FoRLM @ NeurIPS 2025, ArXiv 2025

We introduce Activation State Machine (ASM), a lightweight dynamic steering mechanism that learns the latent dynamics of ideal reasoning trajectories and applies context-aware interventions at inference time.
